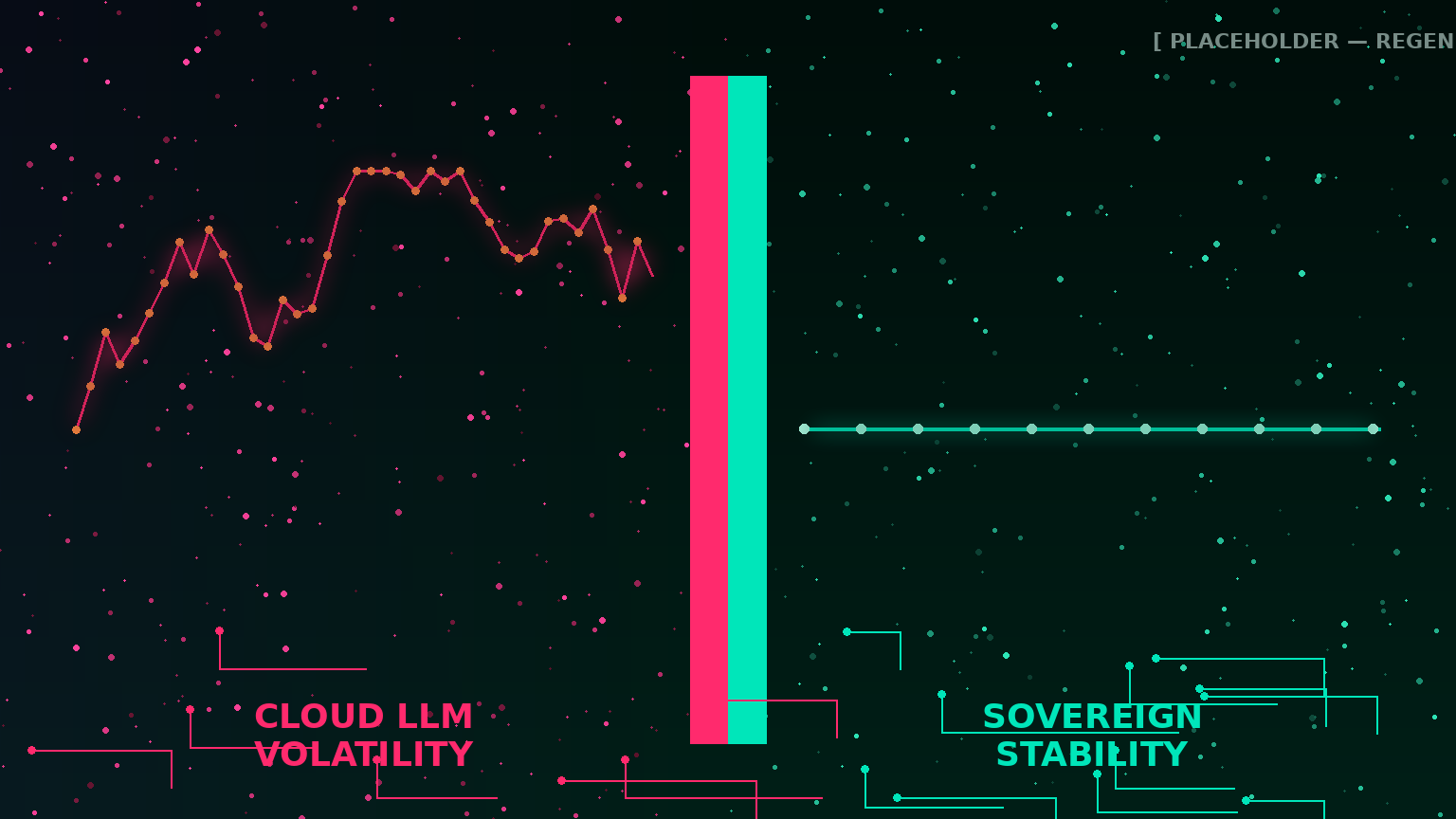

When the Platform Moves: How Sovereign AI Ends the Constant-Change Tax on Cloud LLM Workflows

Anthropic's May 6 SpaceX deal doubled Claude Code rate limits and killed peak-hour throttling, and every team building on Claude immediately had to re-evaluate workflows it had just finished tuning. This treadmill of constant platform change is the hidden tax on cloud AI. On-premise LLM deployment gets you off it.

The Quiet Quitting of OpenClaw: Why Sovereign Agentic AI Is the Only Defensible Path

247K stars in two months, then a wave of users walked away — deleted inboxes, leaked SSH keys, $86/month idle costs. Why on-premise LLM deployment is the only architecture you can trust with autonomous agents in regulated environments.

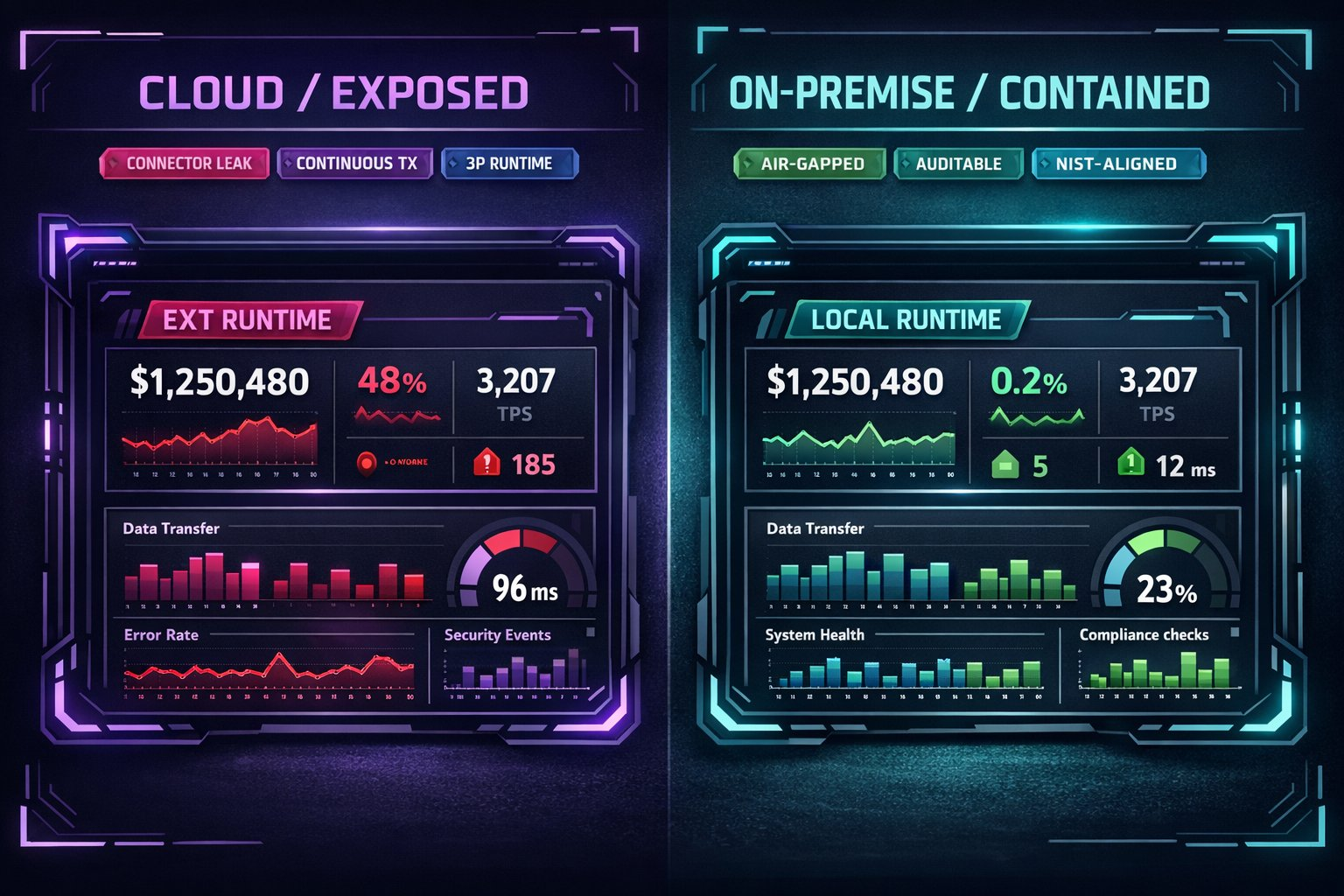

Live Artifacts, Live Exposure: The Sovereign Alternative to Claude Cowork Dashboards

Anthropic's April 2026 Live Artifacts release turns Claude Cowork into a persistent BI layer connected to your apps. For regulated teams, live cloud dashboards mean continuous data exposure. Here's what on-premise LLM deployment looks like instead.

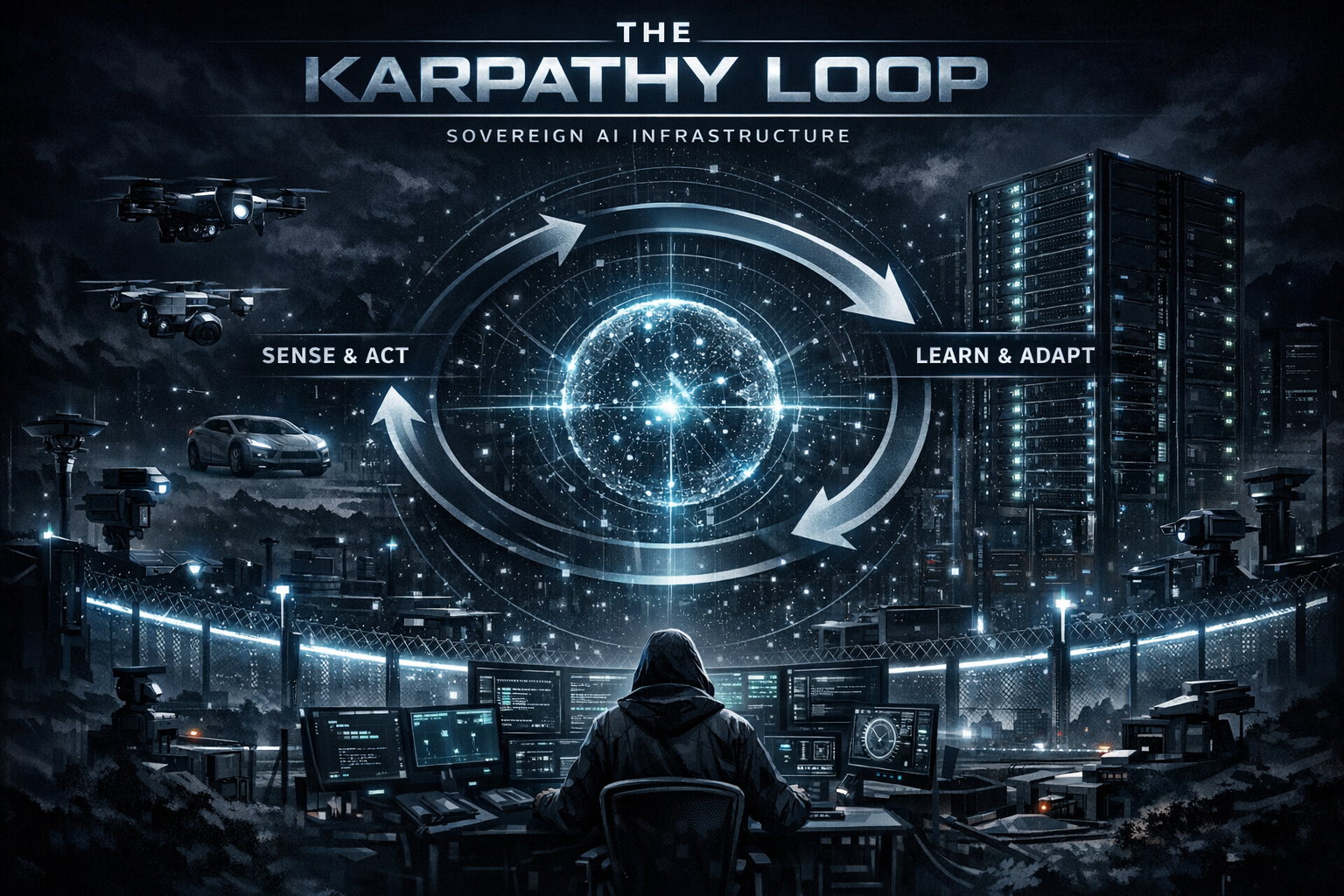

The Karpathy Loop Belongs On-Premise: Safe Autonomous Code Optimization

Karpathy's autoresearch loop surfaced 20 optimizations in 2 days. Shopify got 53% faster template rendering from 93 automated commits. Here's why on-premise LLM deployment is the only defensible container for autonomous agents in regulated environments.

AI Technical Debt Is Compounding. Your Infrastructure Is the Only Fix.

88% of developers report negative impacts from AI technical debt. Technical debt increases 30–41% after AI tool adoption. Sovereign infrastructure provides the engineering discipline that keeps AI debt from becoming an organizational crisis.

The Inference Wall Is Here. Is Your AI Stack Ready?

Five structural shifts from March 2026 — inference economics, AI advertising, data center moratoriums, SaaS collapse, and safety posture — reveal why sovereign infrastructure is the only defensible architecture.

The Math Is Catching Up: Why On-Premise AI Hardware Gets Better With Time

TurboQuant and other quantization breakthroughs show that on-premise hardware gains capability as software improves. Its useful life is 6–8 years, not 3–4.

NIST AI 800-4: The Future of Post-Deployment AI Monitoring

NIST identifies critical gaps in AI monitoring. Here is how your organization can bridge them with sovereign infrastructure.

The Axios NPM Attack: How North Korea Poisoned 100M+ Downloads

A three-hour window. A compromised maintainer account. A RAT payload hidden inside the world's most-used JavaScript HTTP library.

Claude Mythos and the Arrival of Frontier Models

What the next generation of AI means for business agility, and why waiting is the greatest risk of all.

The Claude Code Leak: A Warning on Cloud AI Security Risks

Why relying on cloud-based AI tools creates systemic risks for your company's proprietary data, and why sovereign infrastructure is the solution.

The Compute Cliff: Why Cloud AI Is Unsustainable

And why securing on-premise infrastructure now, even at today's high hardware prices, is the only secure long-term solution.

Why Small Businesses Are Choosing On-Premise AI Over Cloud

The infrastructure ROI: predictable costs, no vendor lock-in, and AI systems that scale as your business grows.

What the SEC's 2026 AI Examination Priorities Mean for Your Firm

Audit-ready AI governance: Why documentation matters more than model performance when SEC examiners review your AI systems.

How Medical Practices Deploy AI Without Violating HIPAA

Meeting ACA Section 1557 requirements, as enforced by HHS, while protecting patient data: why cloud AI creates compliance exposure for medical practices.

Federal AI Compliance: The New Baseline for Regulated Organizations

How the March 2026 White House AI Framework makes sovereign AI infrastructure essential for healthcare, legal, finance, and public services.