Live Artifacts, Live Exposure: The Sovereign Alternative to Claude Cowork Dashboards

Anthropic's April 2026 Live Artifacts release turns Claude Cowork into a persistent BI layer connected to your apps. For regulated teams, a live cloud dashboard is a continuous data stream leaving your perimeter.

On April 20, 2026, Anthropic announced that Claude Cowork can now build Live Artifacts — dashboards and trackers that connect to your apps and files and automatically refresh with current data every time you open them. For teams evaluating Sovereign AI Infrastructure, on-premise LLM deployment, and Secure AI for Regulated Environments under the March 2026 White House AI Framework, this is not just a product update. It is a structural shift in how much of your operational data flows through a third-party model provider — and it deserves a hard look before you connect it to anything that matters.

Live Artifacts are a genuinely impressive piece of engineering. They are also, for any organization in a regulated sector, a governance problem disguised as a productivity feature. This post breaks down what Anthropic shipped, what it means for your data footprint, and what a sovereign alternative actually looks like when built on infrastructure you control.

What Anthropic Actually Shipped

Claude's prior artifact feature was a snapshot model. You could generate a chart, a mini-app, or a tracker inside a conversation, edit it, and export it — but it was a finished file. Opening it tomorrow gave you the same numbers you saw today. Live Artifacts change the architecture. They are persistent, data-connected surfaces that re-query their upstream sources every time they are opened. Anthropic's public messaging frames them as a lightweight business-intelligence layer inside Cowork: build a dashboard once, reuse it indefinitely, and let Claude refresh the data.

The technical mechanism is straightforward. Live Artifacts rely on Cowork's existing connector fabric — the set of authenticated integrations to external services like Gmail, Google Drive, Google Calendar, Notion, Vercel, Slack, and a growing list of SaaS platforms. When you open a Live Artifact, the underlying model issues fresh tool calls to those connectors, ingests the returned data, and renders it into whatever visualization or summary the artifact was designed to produce. The artifact itself is a small program; the connectors are the plumbing; the model is the runtime.

For a solo operator or a small team with no compliance exposure, this is a clean story: describe what you want, connect the tools, and you have a live operating dashboard without writing a line of code. For an organization with patients, clients, counterparties, material non-public information, or anything else a regulator cares about, the same story reads very differently.

The Data Footprint Problem

A Live Artifact is not a static file living on your laptop. Every refresh round-trips through Anthropic's infrastructure. When you open a dashboard that summarizes your inbox, the contents of the retrieved emails are loaded into a model context running in the provider's environment. When a tracker pulls from your Notion workspace, the queried pages transit the same path. When a sales pipeline dashboard connects to your CRM, the customer records follow. The artifact is reusable because the connection is persistent — and the connection is persistent because the connector credentials, the data itself, or both, are continuously accessible to the system that renders the view.

This is not a criticism of Anthropic's security posture. It is a description of what the product does, because that is what the product is for. The critical question is whether the data moving through that path is data you are allowed — legally, contractually, or strategically — to have moving through a third-party inference environment on a continuous basis. The answer for most regulated organizations is some version of "not without a lot of work."

- Healthcare teams have to reason about whether PHI surfaced through a connector query meets HIPAA's definition of a permitted disclosure, and whether the Business Associate Agreement with their model provider covers the specific data flows the Live Artifact triggers.

- Legal and professional services firms face privilege and confidentiality duties that do not map cleanly onto cloud-mediated continuous access to client files.

- Financial services firms have to consider SEC examination expectations around AI governance, material non-public information handling, and the 2026 scrutiny of "AI washing" — where a live dashboard summarizing portfolio data is, in a regulator's eyes, a model-assisted decision surface.

- Public sector and research teams working under federal data classification, export control, or IRB requirements have clear constraints on which environments sensitive records are permitted to transit.

None of this makes Live Artifacts useless for these teams. It means the feature needs to be evaluated against the data sovereignty boundary your organization is actually required to maintain — not against a generic productivity benchmark.

Why "Live" Compounds the Exposure

The previous generation of AI artifacts had a natural governance property: they were one-time. A team could run a single, reviewed query, export the result, and move on. Logging, approval, and redaction were possible because each interaction had a clear start and end. Live Artifacts replace that pattern with a persistent query surface. The dashboard exists. It refreshes. It does so whenever a user opens it, on whatever schedule the user opens it.

Three governance problems follow directly from that shift:

- The boundary of "what was sent to the model" becomes continuous. Instead of a finite set of reviewed prompts and attachments, you have an open-ended stream of tool calls against live data sources. Retrospective audit of exactly what was ingested — required under the NIST AI RMF MEASURE function and most internal AI governance programs — becomes significantly harder.

- Connector scope creep is invisible. A connector authorized for one workflow is implicitly available to every future Live Artifact built on the same account. An analyst building a sales dashboard on Monday has the same access footprint as an intern building a "team mood" tracker on Friday. Without discipline, the least-privilege principle erodes quickly.

- Vendor-side changes silently change your exposure surface. When the provider updates the underlying model, adds new tools to a connector, or expands what a given integration returns by default, your dashboard's behavior changes too. In a regulated environment, any change to how sensitive data is processed is supposed to trigger review. Here it happens as a background improvement you may not be notified about.

Each of these is manageable with governance effort. None of them are solved by the feature itself.

The Regulatory Context You're Building Inside

This release lands in the middle of the most active AI regulatory period the United States has ever seen. The March 2026 White House National AI Policy Framework established federal preemption of state AI laws and sector-specific oversight for healthcare, finance, and critical infrastructure. The NIST AI RMF 1.1 update, also released in March 2026, elevated the MEASURE function for continuous bias, fairness, and performance monitoring. NIST AI 800-4, published the same month, made explicit that post-deployment monitoring requires transparency and control that opaque cloud services structurally cannot provide. The SEC's 2026 examination priorities name AI governance and AI washing as focus areas. HHS Section 1557 remains in force for clinical AI non-discrimination.

Against that backdrop, the defensibility question is not "is this cloud feature secure?" — it is "can we demonstrate, to an auditor, exactly what data our AI systems accessed, when, under whose authority, and with what controls on the output?" A Live Artifact running on third-party infrastructure is capable of being documented, but the documentation work is continuous, and the provider controls most of the variables. A Live Artifact running on infrastructure you own inverts that relationship entirely.

What a Sovereign Dashboard Looks Like

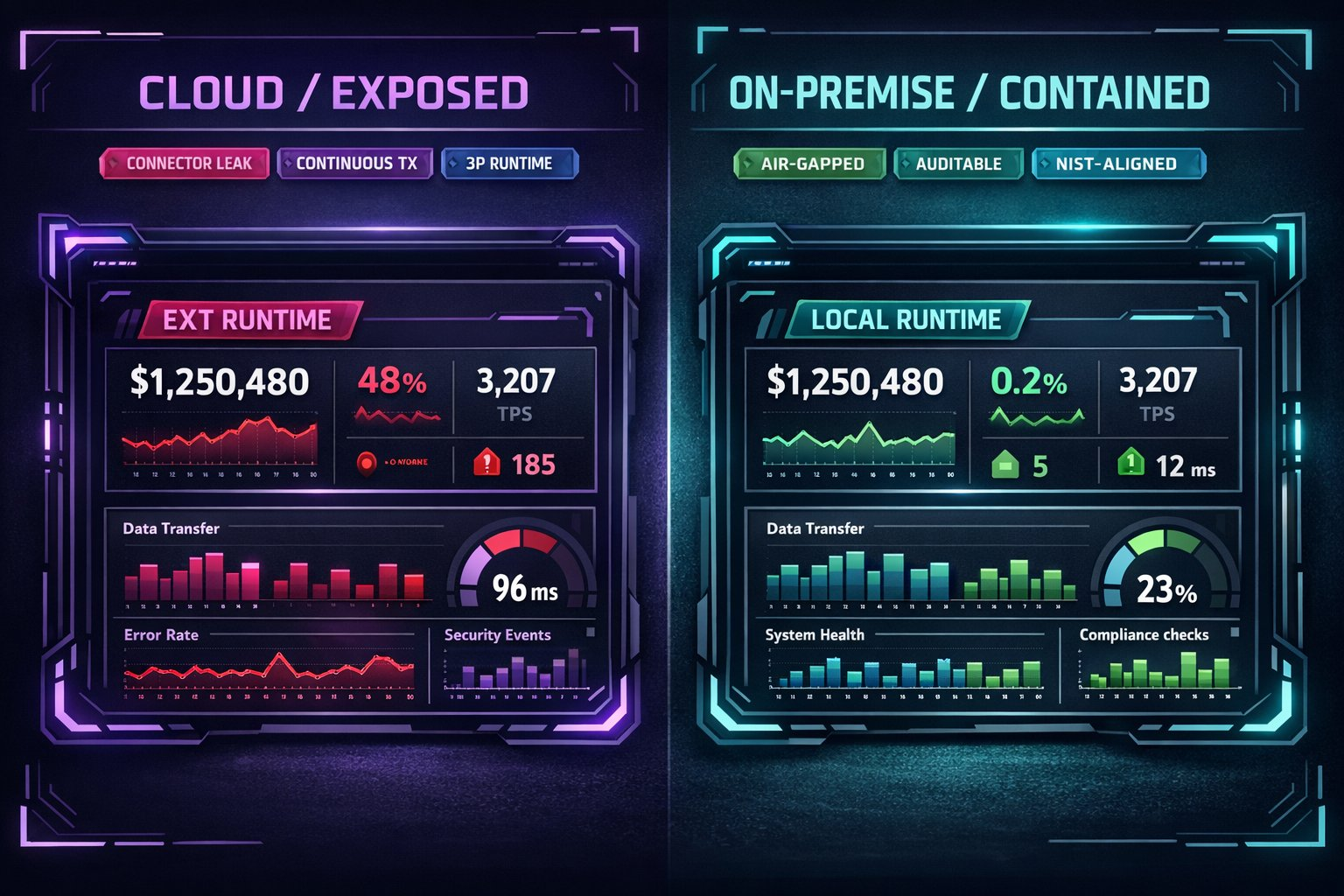

The productivity idea behind Live Artifacts — a persistent, data-connected, LLM-rendered operational view — is the right idea. The question is where the runtime lives. Pivital Systems builds the same capability as a sovereign pattern: local inference, local connectors, local data, and a governance model the organization actually owns.

Architecturally, a sovereign dashboard stack has five layers:

- 01 / Local inference. An on-premise AI server running an open-weights model — typically in the Llama, Qwen, or Mistral families — sized for the organization's concurrency and latency requirements. No prompt, no retrieved document, no dashboard query leaves the network perimeter.

- 02 / Scoped internal connectors. Integrations to the systems of record — email, document stores, ticketing systems, CRMs, ERPs, EHRs — built as audited, pinned services with least-privilege credentials. Each connector's scope is known, reviewed, and changes go through change control.

- 03 / A governed artifact runtime. The dashboards themselves run on infrastructure you control. Refresh logic is deterministic, logging is complete, and the full path from data source to rendered view is inspectable.

- 04 / Observable monitoring. Every query, every retrieval, every generated output is logged to systems your compliance team already trusts. The MEASURE function from NIST AI RMF 1.1 becomes a routine engineering practice rather than a separate reporting exercise.

- 05 / Predictable cost and change control. The cost of the dashboard is the cost of the hardware it runs on, not a per-seat pricing model that scales with usage. Changes happen on your schedule, not the vendor's.

Functionally, a team using a sovereign dashboard sees the same thing a team using Live Artifacts sees: open the view, get the current numbers, ask a follow-up question in natural language, iterate. Structurally, the data never leaves — which is the only architecture that cleanly answers the compliance questions the White House AI Framework, NIST AI RMF, and sector-specific regulators are now asking.

How Pivital Builds This

Pivital delivers sovereign dashboards as part of the same tiered infrastructure practice we use for every other on-premise AI workload. The sizing depends on concurrency and data volume; the architecture is consistent across tiers.

- 01 Standard — $650/mo. The sovereign entry point. Suitable for teams up to ten users who need local inference, a small number of scoped internal connectors, and reliable dashboards over bounded data sources. The right starting point for a regulated team that wants to prove the pattern before expanding it.

- 01 Growth — $1,250/mo. For teams up to thirty users with broader integration needs. Includes eight hours of monthly development time, which is typically used to build custom connectors, refine retrieval logic, and tune the dashboard surface to match how the organization actually works. This is the tier most regulated teams operate at once live dashboards become part of daily workflow.

- 04 Agentic — Custom. For enterprise environments where dashboards are one surface among several agentic workflows. Appropriate when the live view needs to trigger downstream actions, when the organization runs air-gapped or network-segmented environments, or when the compliance surface requires full control over the agent execution environment.

Across all three tiers, the sovereignty guarantee is the same: the model runs on hardware you control, the connectors query systems you own, and the logs live in environments your auditors already trust.

The Question Worth Asking

Live Artifacts are a strong signal about where the industry is going. The future of internal tooling is LLM-rendered, data-connected, persistent, and conversational. That direction is correct. The only structural question is whether your organization can afford — operationally, legally, and strategically — for that runtime to live on someone else's infrastructure.

For organizations without meaningful regulatory exposure, the answer may genuinely be yes. For organizations in healthcare, legal, finance, public sector, or research — or for any organization whose competitive position depends on proprietary data that currently lives in internal systems — the answer is increasingly no. The sovereign pattern is not a rejection of the capability. It is the same capability built on infrastructure you own.

Build Live Dashboards On Infrastructure You Actually Own

Pivital Systems designs, deploys, and operates sovereign AI infrastructure for organizations that cannot send their operational data through a third-party cloud. Whether you need a Tier 1 entry point (01 Standard — $650/mo), a fully integrated team workspace (01 Growth — $1,250/mo), or an enterprise agentic deployment (04 Agentic — custom), we engineer the stack to meet your compliance boundary — not the other way around.

Start an Engineering Conversation →