When the Platform Moves: How Sovereign AI Ends the Constant-Change Tax on Cloud LLM Workflows

Anthropic's May 6 SpaceX deal doubled Claude Code rate limits and killed peak-hour throttling — and every team building on Claude immediately had to re-evaluate workflows they had just finished tuning. That's the cloud LLM treadmill. On-premise AI gets off it.

On May 6, 2026, at its Code with Claude developer conference, Anthropic announced a partnership with SpaceX to use the entire compute capacity of the Colossus 1 data center in Memphis: 220,000 NVIDIA GPUs and 300 megawatts of new capacity coming online within the month. The same announcement doubled Claude Code's five-hour rate limits for Pro, Max, Team, and Enterprise seat-based plans, removed peak-hour throttling for Pro and Max accounts, and significantly raised Opus API rate limits. For teams operating under the March 2026 White House AI Framework and evaluating sovereign AI infrastructure, on-premise LLM deployment, and secure AI for regulated environments, the headline number is not the megawatts. It is the cadence. The platform every cloud-dependent builder is standing on changed again, and even when the change is unambiguously good, the people building on top of it pay the bill.

About an hour into the keynote announcing the new capacity, Claude itself briefly went down for thousands of users. That detail is worth holding onto.

The Anchor: One Announcement, Five Things To Change

Within hours of the May 6 keynote, AI content creators began producing breakdowns of "what builders should do differently now." A representative example, from automation builder Nate Herk's channel, is a nine-minute video titled "Claude Just Solved Session Limits" — walking through the SpaceX deal, the doubled limits, the removed throttle, and a five-item list of architectural changes builders should consider making in response. The video is well made. It is also, structurally, a confession: every time the underlying platform changes, the workflows built on top of it have to be re-evaluated.

The specific changes in this case were genuinely positive. Claude Code's five-hour rate limit doubled for paid plans. The peak-hour throttle that had forced builders to schedule their heaviest workloads around the platform's busy windows is gone. API limits for Opus moved up. Anyone who had architected their pipelines around the previous constraints — queuing systems that delayed work until off-peak hours, splitter logic that broke long sessions into chunks small enough to fit inside the old five-hour window, fallback paths that switched to cheaper models when the Opus limit hit — now has to decide whether to keep that complexity or rip it out. Both options cost engineering time. The change itself was a gift; the response to the change is a refactor.

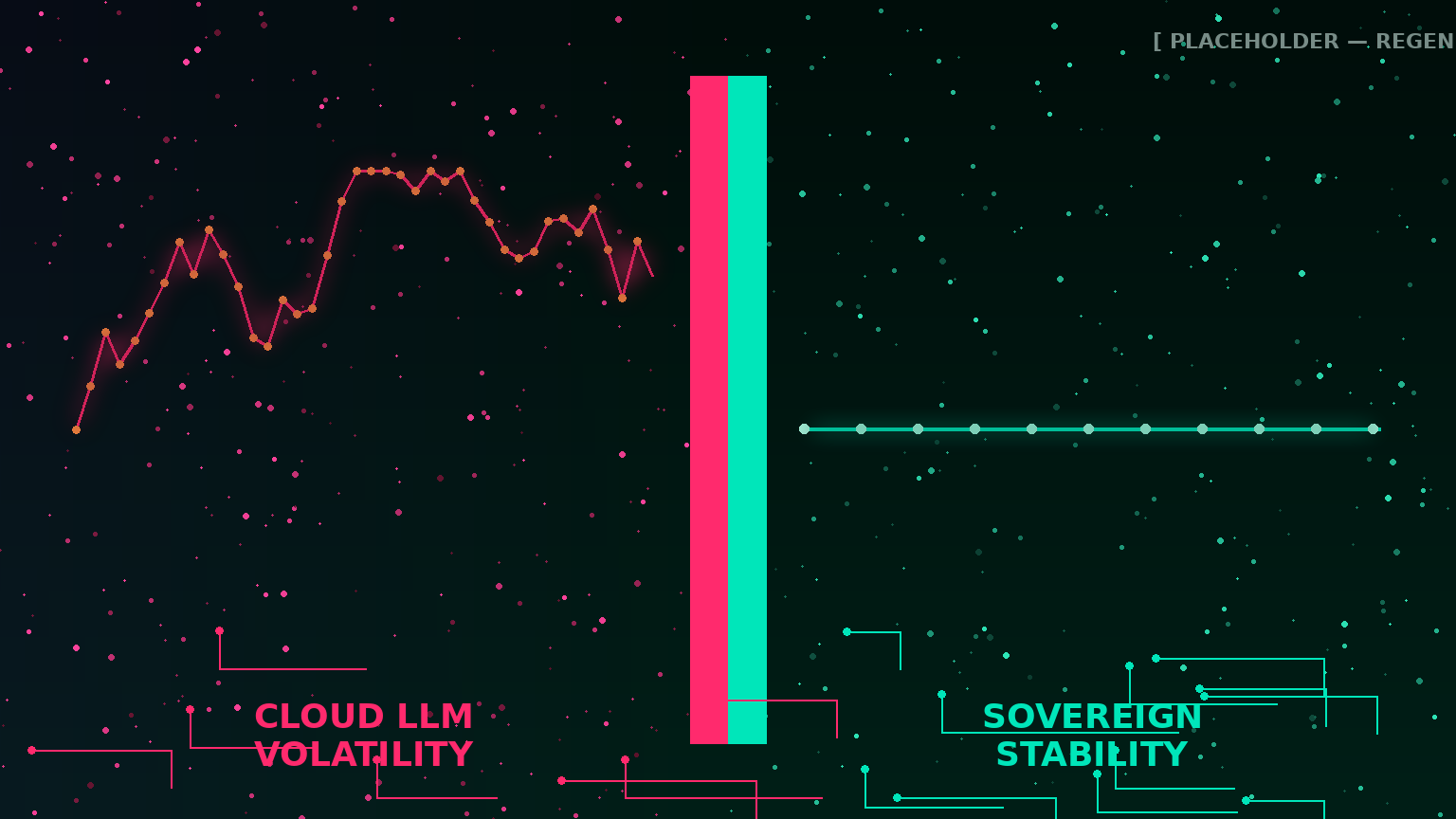

This is the structural condition of cloud LLM development in 2026. The platform is the product, and the product moves.

The Compute Spree Behind the Cadence

The SpaceX deal is one stop on a year-long capacity acquisition tour. Anthropic has now announced an up-to-5GW agreement with Amazon (including nearly 1GW of new capacity by the end of 2026), a 5GW agreement with Google and Broadcom beginning in 2027, a strategic partnership with Microsoft and NVIDIA worth $30 billion of Azure capacity, and a $50 billion U.S. AI infrastructure investment with Fluidstack. CEO Dario Amodei told the Code with Claude audience that the company grew 80x in Q1 2026 against an internal plan for 10x. A planning miss of that size cannot be fixed incrementally, and it explains the unusual sight of one frontier AI lab signing a deal for the entire data center of a direct competitor.

The point is not that any individual deal is wrong. The point is that this is the rhythm. Capacity comes online unevenly. New deals reset the available headroom for paid plans. Models get deprecated and replaced. Pricing tiers shift. Connectors are added and removed. Each individual change makes the platform better. The aggregate effect is that no architecture built on top of the platform is stable for longer than a quarter.

And the changes are not always upward. In the weeks leading up to the May 6 announcement, paid Claude Code users had quietly run into tighter-than-expected limits — which is exactly the kind of unannounced behavior that motivated the doubled-limit fix. The signal is not "things keep getting better." The signal is "things keep moving."

When Good News Is Still a Refactor

A team running Claude Code in production has a real engineering surface area that responds to platform changes. Each of the May 6 updates carries downstream work:

- Doubled five-hour limit. Any session-splitter or hand-off logic written to keep long agentic tasks under the old window is now over-engineered. Removing it cleans up the codebase; leaving it adds latency that no longer pays for itself.

- Peak-hours throttle removed. Queueing systems that deferred heavy workloads until off-peak windows can be retired. Anyone running cron-based offloads, "wait until 2am Pacific" patterns, or partial-day rate budgets is now scheduling around a constraint that no longer exists.

- Higher Opus API limits. Fallback logic that downgraded to a cheaper model on quota exhaustion can be retuned, removed, or repurposed. Every team has to decide whether the fallback is still cost-justified at the new ceiling.

- New compute partner (SpaceX / Colossus 1). Teams subject to data residency, export control, or vendor due diligence requirements now have a new sub-processor to evaluate. The compliance work is not optional; in regulated environments, a change in where inference physically runs can be a documentation event.

- Brief outage during the announcement keynote. A reminder that the same growth driving the headroom increase is also the source of the platform's reliability volatility. Any team relying on Claude as a critical-path system has to revisit its degradation and failover design.

None of this work is intellectually demanding. All of it is real engineering effort, and all of it has to happen on the vendor's schedule, not the team's.
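
One way to picture the refactor decision above is to imagine the vendor's constraints gathered into a single object that the splitter, the queue, and the fallback all read from. The sketch below is a minimal illustration, not anyone's production design: `PlatformLimits`, its fields, the model names, and every number are hypothetical, and "usage units" stands in for whatever the plan actually meters.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class PlatformLimits:
    """Vendor constraints gathered in one place. Every value here is illustrative."""
    window_budget: int               # usage units allowed per rate-limit window
    peak_hours_utc: FrozenSet[int]   # hours to defer heavy work; empty means no throttle
    primary_quota: int               # primary-model calls allowed before falling back


def sessions_needed(task_cost: int, limits: PlatformLimits) -> int:
    """How many sessions a task must be split into to fit the window budget."""
    return -(-task_cost // limits.window_budget)  # ceiling division on integers


def should_defer(hour_utc: int, limits: PlatformLimits) -> bool:
    """True if heavy work starting at this hour should wait for an off-peak window."""
    return hour_utc in limits.peak_hours_utc


def pick_model(calls_used: int, limits: PlatformLimits) -> str:
    """Use the primary model until its quota is spent, then the cheaper fallback."""
    return "primary-model" if calls_used < limits.primary_quota else "fallback-model"


# Hypothetical before/after values; real numbers depend on plan and vendor policy.
OLD_LIMITS = PlatformLimits(window_budget=100,
                            peak_hours_utc=frozenset({13, 14, 15}),
                            primary_quota=50)
NEW_LIMITS = PlatformLimits(window_budget=200,
                            peak_hours_utc=frozenset(),
                            primary_quota=200)
```

Under these assumed values, a task costing 250 units needs three sessions against `OLD_LIMITS` and two against `NEW_LIMITS`, and the 14:00 UTC deferral disappears. Even in this best case, someone still has to learn the new numbers, update them, and re-test the paths that depend on them.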

The Hidden Tax: Why Constant Change Is the Real Cost of Cloud AI

Most published cost analyses of cloud AI focus on the per-token bill. That number is visible, line-itemed, and easy to compare against alternatives. The cost that does not appear on any invoice is the engineering time spent maintaining alignment with a platform that updates itself.

Over the past twelve months alone, teams building on frontier cloud LLMs have had to absorb:

- Model deprecations and replacements. Older model versions retired on the vendor's timeline, with prompts and evaluations tuned to the previous behavior needing to be re-run, re-tested, and sometimes rewritten end-to-end.

- Pricing tier restructures. Plan-level changes that move features between tiers, change the cost of identical workloads, and force renegotiation of internal cost allocation.

- Rate limit policy changes. New throttling rules announced with little notice, sometimes tightened and sometimes loosened — each one requiring the workflows underneath to adapt.

- Connector and integration churn. Third-party integrations added, modified, deprecated, or scope-expanded — each of which changes the data footprint of any workflow that uses them.

- Outage events. Brief platform-wide unavailability, sometimes coinciding with major capacity announcements as the underlying infrastructure absorbs new load.

- Compliance surface changes. New sub-processors, new geographic regions, new data-handling defaults — each one a documentation event for regulated teams.

For an engineering organization, the cumulative effect is a maintenance load that is not visible in the budget but is very visible in roadmap slippage. Features that were supposed to ship this quarter slip because the platform shipped first.
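
The tax becomes easier to argue about once it is written down as arithmetic: events per year, times engineer-hours per event, times a loaded hourly rate. The sketch below assumes a team keeps its own log of platform-change events. The categories, counts, hours, and rate in the usage note are placeholders chosen for illustration, not measurements from any real team.

```python
from typing import Dict, Tuple

# category -> (events per year, engineer-hours per event); supplied by the team
ChangeLog = Dict[str, Tuple[int, float]]


def change_tax_hours(events: ChangeLog) -> float:
    """Total engineer-hours per year spent adapting to platform changes."""
    return sum((count * hours for count, hours in events.values()), 0.0)


def change_tax_cost(events: ChangeLog, loaded_hourly_rate: float) -> float:
    """The same total expressed in currency at a given loaded hourly rate."""
    return change_tax_hours(events) * loaded_hourly_rate
```

With placeholder inputs of two model deprecations at 40 hours each and four rate-limit changes at 8 hours each, the total is 112 engineer-hours per year. At an assumed loaded rate of 100 per hour, that is 11,200 in cost that never appears on the vendor invoice.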

What Sovereign Infrastructure Actually Changes

The argument for on-premise AI is usually framed in terms of data sovereignty, regulatory defensibility, and cost predictability. All three remain correct. The May 6 announcement surfaces a fourth argument that is often underweighted: operational stability against vendor change.

When the inference runtime, the model weights, the connectors, and the orchestration layer all live on infrastructure the organization owns, change becomes an internal decision rather than an external event. The architecture has four properties that cloud LLMs structurally cannot offer:

- The rate limit is the hardware. An on-premise inference server has a known, measured throughput. It does not double overnight, and it does not throttle during peak hours. Capacity planning becomes an engineering exercise — sizing concurrency against utilization — instead of a policy-tracking exercise.

- The model is the model you chose. Open-weights models in the Llama, Qwen, Mistral, and Gemma families remain the model you deployed for as long as you choose to run them. Upgrades happen on the organization's schedule, against the organization's evaluation suite, with full ability to A/B test the new version against the old before cutting over.

- The connectors are the connectors you built. Internal integrations to email, document stores, ticketing systems, CRMs, ERPs, and EHRs are versioned services with pinned dependencies and least-privilege credentials. They change when the organization changes them.

- The compliance surface is stable. The sub-processor list is one entry long: the organization itself. The data residency answer is the organization's own data center or co-location facility. The auditability story is whatever logging the organization already trusts.
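
Sizing concurrency against utilization, as the first property above puts it, can be sketched with Little's law: the average number of requests in flight equals the arrival rate multiplied by the mean time each request takes. The function below is a rough first-pass estimate under stated assumptions. `slots_per_server` (concurrent requests one server sustains at acceptable latency) and the 0.75 utilization target are hypothetical inputs that a team would measure on its own hardware.

```python
import math


def required_servers(peak_requests_per_min: float,
                     mean_latency_s: float,
                     slots_per_server: int,
                     target_utilization: float = 0.75) -> int:
    """Estimate inference servers needed at peak load, leaving headroom."""
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if slots_per_server <= 0:
        raise ValueError("slots_per_server must be positive")
    arrival_rate_per_s = peak_requests_per_min / 60.0
    # Little's law: average in-flight requests L = lambda * W
    in_flight = arrival_rate_per_s * mean_latency_s
    usable_slots = slots_per_server * target_utilization
    return max(1, math.ceil(in_flight / usable_slots))
```

For example, 120 requests per minute at a 12-second mean latency is 24 requests in flight. With 8 slots per server run at 75% utilization, that works out to 4 servers. The estimate ignores burstiness and queueing delay, so it is a starting point for load testing rather than a substitute for it.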

The downstream effect is that engineering teams stop reading vendor changelogs as part of their job description. The platform is not a moving target. The workflows built on it stay built.
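
Upgrading on the organization's own schedule, against its own evaluation suite, implies some kind of promotion gate. The sketch below is one minimal form of that gate, assuming each model can be wrapped as a function from prompt to output and each evaluation case pairs a prompt with a pass/fail check. `Model`, `EvalCase`, `pass_rate`, and `should_promote` are hypothetical names, not part of any particular framework.

```python
from typing import Callable, List, Tuple

Model = Callable[[str], str]                       # prompt -> model output
EvalCase = Tuple[str, Callable[[str], bool]]       # (prompt, check on the output)


def pass_rate(model: Model, suite: List[EvalCase]) -> float:
    """Fraction of evaluation cases whose check accepts the model's output."""
    if not suite:
        raise ValueError("evaluation suite is empty")
    passed = sum(1 for prompt, check in suite if check(model(prompt)))
    return passed / len(suite)


def should_promote(current: Model, candidate: Model, suite: List[EvalCase],
                   max_regression: float = 0.0) -> bool:
    """Promote only if the candidate does not regress beyond the tolerance."""
    return pass_rate(candidate, suite) >= pass_rate(current, suite) - max_regression
```

The point of the gate is that the cutover decision is made by the team's own suite and tolerance. A real suite would also need to handle non-deterministic outputs, for instance by sampling each prompt several times.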

The Reliability Story the Outage Tells

The brief Claude outage during the May 6 keynote, recorded by Downdetector around 11:16 AM ET, deserves a closer look. It coincided with the moment Anthropic was on stage explaining how the new compute capacity would solve the capacity problem. That is not a criticism of Anthropic's engineering. Outages happen, and the company's growth rate makes them statistically inevitable. It is a description of what any cloud LLM dependency looks like at scale: the same conditions that drive the platform's improvement also drive its volatility.

For internal productivity workloads, occasional outages are tolerable. For workloads on the critical path — clinical decision support, legal drafting under deadline, financial reporting workflows, public-sector case management — they are not. On-premise inference, with predictable hardware and a redundancy story the organization owns, is the only architecture that decouples critical AI work from a third party's growth pains.
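
A redundancy story the organization owns can be as simple as an ordered list of inference backends tried in turn. The sketch below assumes each backend is wrapped as a plain function from prompt to completion, for example two on-premise replicas. `Backend`, `AllBackendsFailed`, and `complete_with_failover` are hypothetical names, and a production version would add timeouts, health checks, and logging.

```python
from typing import Callable, List, Sequence

Backend = Callable[[str], str]   # prompt -> completion; raises on failure


class AllBackendsFailed(RuntimeError):
    """Raised when every configured backend has failed for a request."""


def complete_with_failover(prompt: str, backends: Sequence[Backend]) -> str:
    """Try each backend in order and return the first successful completion."""
    if not backends:
        raise ValueError("at least one backend is required")
    errors: List[Exception] = []
    for backend in backends:
        try:
            return backend(prompt)
        except Exception as exc:  # broad on purpose: any failure triggers failover
            errors.append(exc)
    raise AllBackendsFailed(f"all {len(errors)} backends failed") from errors[-1]
```

Because the order and membership of `backends` is the organization's own configuration, the degradation path changes only when the organization edits that list.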

How Pivital Builds the Stable Stack

Pivital Systems builds on-premise AI infrastructure specifically for organizations that cannot accept their AI behavior changing on someone else's schedule. The tiers are sized to where the organization is, not where the vendor's roadmap is going.

- 01 Standard — $650/mo. The sovereign entry point. Local inference on a dedicated AI server, sized for teams up to ten users. Suitable for organizations that want stable internal AI workloads — drafting assistance, document review, code review, knowledge retrieval — without subscribing to a moving platform.

- 01 Growth — $1,250/mo. For teams up to thirty users with broader workflow needs. Includes eight hours of monthly development time, typically used to build internal connectors, refine retrieval logic, and integrate the on-premise stack into existing systems. The most common operating tier once an organization is running AI inside daily workflows.

- 04 Agentic — Custom. Enterprise deployments where multiple agentic workflows run against the on-premise stack — research agents, document workflows, code agents, customer service triage. Appropriate for air-gapped or network-segmented environments and for compliance surfaces that require full control over the execution environment.

Across all three tiers, the sovereignty guarantee is operational as well as legal: the model runs where you run it, the connectors query systems you own, and the platform does not change unless you change it.

The Question Builders Should Be Asking

The May 6 announcement was, on its face, good news for cloud-dependent builders. More compute, higher limits, fewer throttles. The fact that those same builders immediately needed videos listing five things to consider doing differently is the part of the story worth taking seriously. Improvement and stability are not the same thing. A platform that gets better every quarter is also a platform that requires its dependents to adapt every quarter.

For organizations whose AI workloads are core to how they operate — and especially for organizations in regulated sectors where any change to how sensitive data is processed is a documentation event — the question is not which cloud LLM has the best limits today. It is whether the organization's AI roadmap should be tied to a platform whose limits move on a schedule the organization does not control.

Sovereign AI infrastructure is not a rejection of frontier cloud models. It is the architectural answer to the constant-change tax those models impose on the teams building inside them. The runtime sits where you sit. The platform stops moving. Engineering time goes back to the work it was supposed to be doing.

Stop Building on a Moving Platform

Pivital Systems designs, deploys, and operates on-premise AI infrastructure for organizations that need their AI behavior stable, auditable, and under their own control. Whether you are evaluating a Tier 1 entry point (01 Standard — $650/mo), full team integration (01 Growth — $1,250/mo), or enterprise agentic deployment (04 Agentic — custom), we engineer the stack to your compliance and operational boundary — and then we stop changing it.
